Expressive Facial Gestures from Motion Capture Data

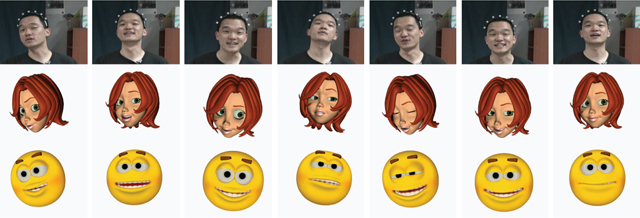

Human facial gestures often exhibit natural stochastic variations, such as how often the eyes blink, how often the eyebrows and nose twitch, and how the head moves while speaking. These stochastic movements of facial features are key ingredients for generating convincing facial expressions. Although such small variations have been simulated using noise functions in many graphics applications, modulating noise functions to match the natural variations induced by a character's affective state and personality is difficult and unintuitive. We present a technique for generating subtle expressive facial gestures (facial expressions and head motion) semi-automatically from motion capture data. Our approach is based on Markov random fields that are simulated at two levels. At the lower level, the coordinated movements of facial features are captured, parameterized, and transferred to synthetic faces using basis shapes. The upper level represents the independent stochastic behavior of facial features. Experimental results show that our system generates expressive facial gestures synchronized with input speech.
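As a rough illustration of what "transferred to synthetic faces using basis shapes" can mean in practice, the sketch below evaluates a generic linear basis-shape (blendshape) model: a face is the neutral mesh plus a weighted sum of per-vertex offsets. This is an assumption made for illustration only, not the formulation used in the paper; the function `blend_face`, its parameters, and the two example shapes are all hypothetical.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def blend_face(
    neutral: Sequence[Vec3],
    basis_offsets: Sequence[Sequence[Vec3]],
    weights: Sequence[float],
) -> List[Vec3]:
    """Return neutral + sum_i weights[i] * basis_offsets[i], vertex by vertex.

    Assumes a linear basis-shape model (hypothetical; not taken from the paper).
    Each entry of basis_offsets holds one displacement per neutral vertex.
    """
    if len(basis_offsets) != len(weights):
        raise ValueError("need exactly one weight per basis shape")

    # Work on mutable copies so the neutral mesh is left untouched.
    result = [list(vertex) for vertex in neutral]

    for offsets, weight in zip(basis_offsets, weights):
        if len(offsets) != len(neutral):
            raise ValueError("basis shape and neutral mesh differ in vertex count")
        for vertex, offset in zip(result, offsets):
            for axis in range(3):
                vertex[axis] += weight * offset[axis]

    return [(v[0], v[1], v[2]) for v in result]


# Toy mesh with two vertices and two made-up basis shapes.
neutral_mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
brow_raise = [(0.0, 1.0, 0.0), (0.0, 0.0, 0.0)]   # moves only the first vertex
jaw_open = [(0.0, 0.0, 0.0), (0.0, -2.0, 0.0)]    # moves only the second vertex

face = blend_face(neutral_mesh, [brow_raise, jaw_open], [0.5, 0.25])
```

In a setup like this, the weights would vary over time, driven by parameters estimated from the captured motion, so the same weight curves could animate any synthetic face that provides matching basis shapes.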

Eunjung Ju and Jehee Lee. Expressive Facial Gestures from Motion Capture Data. To appear in Eurographics 2008.

Paper download [pdf] (3.5M)

Video download [mov] (83M)